Apache Lucene relevance models

1/7/2024

If you’d like our help with your Solr project, get in touch today. Our Solr consulting experts know how to get the best out of this powerful technology, and via our Proven Process we can empower your team to deliver the value your business requires. Our free Relevance Slack community has over 2000 members and a dedicated #solr channel. We run the London Lucene/Solr Meetup and our Haystack conference series, which focuses on relevance engineering. We teach the craft of Solr relevance engineering through our Think Like a Relevance Engineer Solr training, regular blogs and our YouTube channel. We create and maintain open source plugins, extensions and tools for Solr, including Quepid, the test-driven relevancy dashboard, and a number of modules available on ol and our GitHub.

Our CEO Eric Pugh is a Solr Committer and co-authored the first book on Solr, Apache Solr Enterprise Search Server, now in its third edition. We’ve helped organizations across the world implement powerful, scalable and accurate search engines based on these open source platforms. We’ve been Solr consultants for over 15 years.

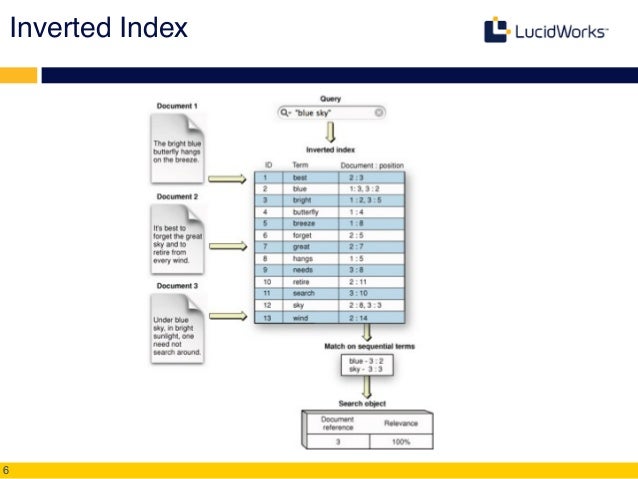

Solr is highly scalable, resilient and accurate. Apache Solr, based on Apache Lucene and available under the open source Apache license, powers search for some of the world’s largest organisations including Bloomberg, Best Buy, Sears, Instagram and AOL.

Spark SQL and MLlib are optimized for running feature extraction and machine learning algorithms on large columnar datasets through full scans. However, when dealing with document datasets where the features are represented as a variable number of columns in each document, and where use cases demand searching over columns to retrieve documents and generating machine learning models on the retrieved documents in near real time, a close integration between Spark and Lucene was needed. We introduced LuceneDAO (data access object), which supports building distributed Lucene shards from a DataFrame and saving the shards to HDFS for query processors like SolrCloud. Lucene shards maintain the document-term view for search and the vector space representation for machine learning pipelines.

We used Spark as our distributed query processing engine, where each query is represented as a boolean combination over terms. LuceneDAO is used to load the shards onto Spark executors and power sub-second distributed document retrieval for the queries. We developed Spark MLlib based estimators for classification and recommendation pipelines and use the vector space representation to train, cross-validate and score models to power synchronous and asynchronous APIs. Our synchronous API uses Spark-as-a-Service, while our asynchronous API uses Kafka, Spark Streaming and HBase for maintaining job status and results.

In this talk we will demonstrate LuceneDAO write and read performance on millions of documents with 1M+ terms. We will show algorithmic details of our model building pipelines that are employed for performance optimization and demonstrate latency of the APIs on a suite of queries generated over 1M+ terms. Key takeaways from the talk will be a thorough understanding of how to make Lucene powered search a first class citizen to build interactive machine learning pipelines using Spark MLlib.

To make DFR pass at all, I changed SimilarityBase to use double everywhere internally, then cast to 32-bit float at the end. Scoring models without warnings in the Javadocs pass: models G, I(F), I(n) and I(ne). The ones with warnings in the Javadocs all fail: models BE, D and P. I think this is a good sign it works to do what we need.

I would like to implement relevance feedback in Solr. Is it possible to configure Solr's More Like This feature to behave like "More Like Those"? In other words: given a set of documents, return ... Solr already has a More Like This feature: given a single document, return a set of similar documents ranked by similarity to the single input document.
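To make the phrase "each query is represented as a boolean combination over terms" concrete, here is a minimal sketch in plain Python. It is not LuceneDAO or Spark code: the tuple-based query format and the `build_index` and `evaluate` helpers are hypothetical names invented for this illustration, and a single in-memory dictionary stands in for a Lucene shard.

```python
from collections import defaultdict


def build_index(docs):
    """Build a toy inverted index: term -> set of ids of documents containing it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for term in text.lower().split():
            index[term].add(doc_id)
    return index


def evaluate(query, index, all_ids):
    """Evaluate a nested boolean query against the index.

    A query is either a term (str) or a tuple whose first element is an
    operator: ("AND", q1, q2, ...), ("OR", q1, q2, ...) or ("NOT", q).
    This query format is an assumption made for the sketch.
    """
    if isinstance(query, str):
        return set(index.get(query.lower(), set()))
    op, *args = query
    results = [evaluate(arg, index, all_ids) for arg in args]
    if op == "AND":
        return set.intersection(*results)
    if op == "OR":
        return set.union(*results)
    if op == "NOT":
        return all_ids - results[0]
    raise ValueError(f"unknown operator: {op}")


docs = {
    1: "lucene search engine",
    2: "spark machine learning",
    3: "lucene spark integration",
}
index = build_index(docs)
all_ids = set(docs)

# Documents mentioning "lucene" together with "spark" or "engine".
matches = evaluate(("AND", "lucene", ("OR", "spark", "engine")), index, all_ids)
```

In a distributed setting, one could imagine each executor evaluating the same query against its own shard and the driver merging the per-shard results, but that part is outside this sketch.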
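The DFR note above describes computing in double precision internally and casting to a 32-bit float only at the end. The sketch below illustrates that idea in plain Python rather than Lucene's Java code. The formula is the textbook geometric model from the DFR literature, with lambda = F / N, and is used here as an assumption; Lucene's own implementation of model G may differ in detail. All function names are hypothetical.

```python
import math
import struct


def to_float32(x):
    """Round a 64-bit Python float to the nearest 32-bit float value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def geometric_information(tfn, total_term_freq, num_docs):
    """Information content -log2 P(tfn) under a geometric term distribution.

    Assumed textbook form with lam = F / N:
    log2(1 + lam) + tfn * log2((1 + lam) / lam).
    """
    lam = total_term_freq / num_docs
    return math.log2(1 + lam) + tfn * math.log2((1 + lam) / lam)


def score_cast_at_end(tfn, total_term_freq, num_docs):
    """Do all arithmetic in double precision and round to float32 once."""
    return to_float32(geometric_information(tfn, total_term_freq, num_docs))


def score_float32_throughout(tfn, total_term_freq, num_docs):
    """Round every intermediate result to float32, for comparison only."""
    lam = to_float32(total_term_freq / num_docs)
    a = to_float32(math.log2(to_float32(1 + lam)))
    b = to_float32(math.log2(to_float32(to_float32(1 + lam) / lam)))
    return to_float32(a + to_float32(tfn * b))


# Example statistics (made up for the illustration).
scores = [score_cast_at_end(tfn, 1000, 1_000_000) for tfn in range(1, 6)]
```

With a single cast at the end, the only rounding error is the final conversion to float32. With rounding at every step, the errors of each intermediate operation can accumulate, which is the kind of effect the change described above avoids.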
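One common way to approach the "More Like Those" idea raised above is to average the term vectors of the seed documents and rank the remaining documents by cosine similarity to that centroid. The sketch below does this in plain Python. It is not a Solr feature or configuration, the tf-idf weighting is one simple choice among many, and the `tfidf_vectors`, `cosine` and `more_like_those` names are hypothetical.

```python
import math
from collections import Counter


def tfidf_vectors(docs):
    """Return {doc_id: {term: weight}} with weight = tf * log(1 + N / df)."""
    tokenized = {doc_id: text.lower().split() for doc_id, text in docs.items()}
    n = len(tokenized)
    df = Counter(term for tokens in tokenized.values() for term in set(tokens))
    return {
        doc_id: {t: tf * math.log(1 + n / df[t]) for t, tf in Counter(tokens).items()}
        for doc_id, tokens in tokenized.items()
    }


def cosine(a, b):
    """Cosine similarity between two sparse vectors stored as dicts."""
    dot = sum(w * b.get(t, 0.0) for t, w in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def more_like_those(seed_ids, docs, top_n=3):
    """Rank non-seed documents by similarity to the centroid of the seeds."""
    vectors = tfidf_vectors(docs)
    centroid = Counter()
    for doc_id in seed_ids:
        for term, weight in vectors[doc_id].items():
            centroid[term] += weight / len(seed_ids)
    scored = [(cosine(centroid, vectors[d]), d) for d in docs if d not in seed_ids]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))  # best first, ties by id
    return [doc_id for _, doc_id in scored[:top_n]]


docs = {
    "a": "solr relevance tuning guide",
    "b": "lucene relevance scoring models",
    "c": "spark streaming with kafka",
    "d": "relevance scoring in solr and lucene",
    "e": "kafka cluster administration",
}

# Documents "a" and "b" are the ones the user marked as relevant.
similar = more_like_those(["a", "b"], docs)
```

Averaging the seed vectors is the positive-feedback part of classic relevance feedback. A fuller treatment would also weight the original query and subtract the non-relevant documents.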
